The complexity of conversation

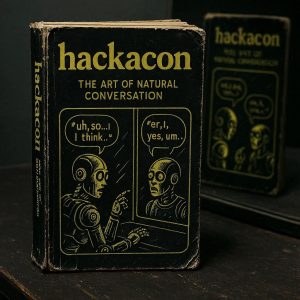

AI cannot yet generate spontaneous, natural-sounding conversation. Why not?

If you listen to conversations around you the way a linguist does, you’ll notice that they are full of repetitions, false starts, ungrammatical choices, hesitations, overlaps, interruptions, sniffs, coughs, stutters, digressions, distractions, tempo changes, volume shifts, sighs, laughs, and far more besides.

But that’s not all. If we’re talking to someone we like and their voice is a little different to ours (e.g. more regional, slower, or quieter), we may engage in what linguists call accommodation: we slightly reduce the differences between us, e.g. by slowing down or speaking a little more quietly. It’s a subtle way of moving behaviourally closer as a signal that we like them. If we don’t like them, we may maintain our differences or even exaggerate them, and in rare cases we may deliberately over-accommodate to parody and mock them.

And that’s still not everything. Navigating conversation is remarkably complex. Just try speaking whenever you like, rather than waiting for the right moment to get in, and see how it goes. That right moment is known as a TRP, a transition relevance place. Most of us learn from a very early age to detect TRPs so that we can jump in without being rude, but plenty of people, for various reasons, never pick up this skill, and this can have difficult social consequences.

There are also moments where we’re expected to provide back-channel markers, the “mmm” and “yeah” noises that reassure someone we’re still engaged. If these sound trivial, try having a conversation without offering any, and see how many seconds it takes before the other person grinds to a halt or even asks what’s wrong.

There are pauses that shouldn’t be filled and pauses that should, interruptions that are very welcome and interruptions that are obnoxious, overlap that signals support and overlap that is just someone trying to hog the airwaves.

However effortless conversation may feel to some of us (certainly not everyone!), it is a highly complex, many-layered social behaviour. And we haven’t yet addressed the fact that in HackaCon, your spontaneous, natural conversation is meant to be between two specific people.

Surely, though, if we have ChatGPT, we’re there, right? It has “chat” in the name, after all…

Large Language Model, not Large Conversational Model

This is where we get to the issue: the training data. When it comes to speech, AI has largely been trained on transcripts. In many cases, these are produced by running speech-to-text software over audio of natural monologues and dialogues, but this process (a) doesn’t capture (or even deliberately removes) many natural conversational features, and (b) often doesn’t “diarise” the different voices (i.e. label them Speaker A, Speaker B, and so on), or doesn’t do so particularly accurately, especially where there are more than two speakers and/or lots of background noise. Worse, LLMs are often trained on scripted dialogue from TV shows, movies, political speeches, pre-written podcast episodes, and so forth. These are poor, stylised approximations of conversation with a great many of those spontaneous, natural features stripped out.

You might argue that someone with a particularly creative flair could just write their own dialogue, perhaps even act it out and use AI to convert the voices. This is certainly plausible, but only if the script-writing and acting really are top-notch, and both are much, much harder than you might think. As a quick test, see how readily you can distinguish TV and movie conversations from the real thing. Acted dialogue has a quality that is very difficult to eradicate, even when it is trying its hardest to sound natural.

Moreover, this assumes that your writers and actors are native speakers of the language in question, but what if your target speaks another language? For transnational cybercrime and hostile state operations, the risk of being caught out by a non-native disfluency is too high, since it could reveal the origins of the attack. Rather than recruiting a fluent accomplice who may later turn on you, it’s arguably more strategic to let the AI produce a (seemingly) flawless script with no unwitting clues buried in it.

Where are we now?

Non-interactive dialogue is the critical step between where we are right now and where AI-enabled crime is very likely heading in the future:

- Non-interactive monologue (e.g. a voicenote) <- this problem is mostly solved

- Non-interactive dialogue (e.g. a recording of a conversation) <- we are here; welcome to HackaCon

- Interactive dialogue (e.g. a live conversation) <- this is the (near?) future

You might then say, “Yes, but the Arup heist involved a live conversation with several participants”, and you might point out that lots of voice-enabled cybercrime seems to have happened via real-time phone calls. We would agree… to an extent. But what most of these cases have in common is that they did not involve spontaneous conversation.

Such attacks largely rely on pre-recorded chunks (yes/no responses, credentials, demands, etc.), where the attacker either knew the call-centre script beforehand or very deliberately controlled the interaction with the target to keep the conversation within strict boundaries. No spontaneity. No creativity. No conjuring up novel answers on the spot.

This is because current “interactive” generative AI systems suffer major latency problems under these conditions, leaving gaps that are, in human terms, far too long for natural conversation. For humans, a gap of just one second is noticeable. Two seconds means there’s a big problem. Three seconds usually prompts us to check whether the other person heard us or whether they’re okay. Generative AI simply can’t yet work to our timescales, and suspicious pauses are likely to spell the end of most social engineering attempts.

All this should start to answer the following question…

Why should we care?

In terms of security, this development is critical. Social engineering (some like to call it human effects) is a key factor in cybercrime. A system can have the most futuristic quantum cryptography in the world, but if the human in charge of it is persuaded to hand over the keys, all that architecture is meaningless. Whilst technological defences can be continually hardened, the human operating system hasn’t changed in hundreds of thousands of years. Just as old-school burglars learned to ignore the locks and focus on the hinges, and car thieves around the turn of the millennium shifted from forcing doors to stealing keys, cybercriminals are recognising that attacking a well-maintained, robust system is likely a much tougher challenge than attacking the people who run it. A SysAdmin, for instance, may not realise (quickly enough) that their latest dating app match is interested not only in quiet walks on the beach and lazy weekends, but also in accessing the corporation that their employer supplies. And people are much more likely to be indiscreet in private conversation than in writing, especially if they’re chatting with a sweetheart they’d like to impress…

But even before we arrive at full, live, spontaneous, human-like conversations with AI, deepfakes of non-interactive dialogue present us with other difficulties, one of which concerns evidence.

Evidential integrity

Historically, investigations of all kinds have turned to CCTV, doorbell footage, voicenotes, and similar recordings to provide an objective, neutral witness, but we are already seeing the first cases of suspects claiming that incriminating recordings are deepfakes.

If, for instance, a police force is accused of framing a suspect, could it show that current generative AI is extremely unlikely to have produced the sample in question? A key part of the solution will, of course, lie in proving the provenance of any disputed sample, but given how exhaustive that defence and the processes around it could become, another part may well lie in establishing the limits of generative AI as they stand right now.

In short, HackaCon is about understanding where we are in this middle ground, and how quickly we will move towards spontaneous, human-like, conversational generative AI models, including the kind that could charm your credentials right out of you.